Programming Model

OpenCL support for Nu+

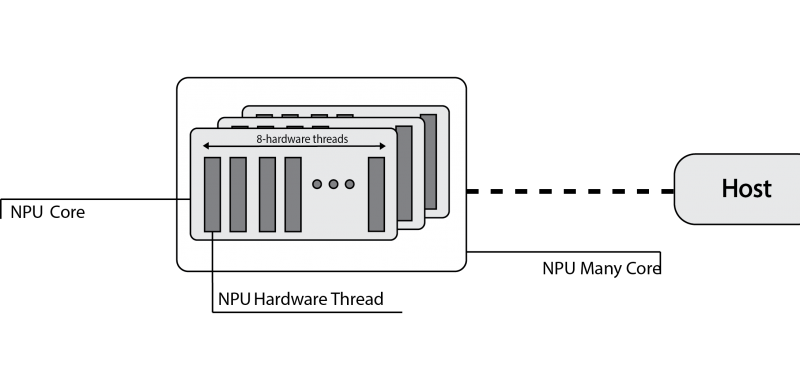

OpenCL defines a platform as a set of computing devices to which the host is connected. Each device is further divided into several compute units (CUs), each of which is a collection of processing elements (PEs). Recall that the target platform is architecturally designed around a single core, structured as a set of at most eight hardware threads. The hardware threads compete with one another for access to sixteen hardware lanes, which execute both scalar and vector operations on 32-bit integer or floating-point operands.

The computing device abstraction is physically mapped onto the nu+ many-core architecture, which can be configured in terms of the number of cores. Each nu+ core maps onto an OpenCL compute unit. Internally, a nu+ core is composed of hardware threads, each of which represents an OpenCL processing element.

Execution Model Matching

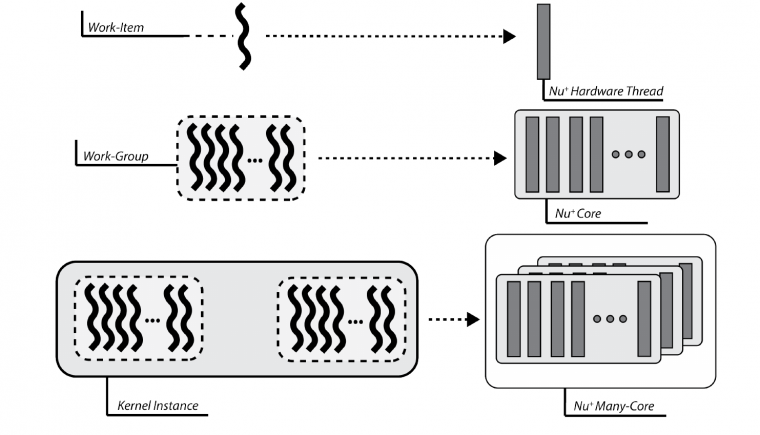

From the execution model point of view, OpenCL relies on an N-dimensional index space, where each point represents a kernel instance execution. Since the physical kernel instance execution is done by the hardware threads, the OpenCL work-item is mapped onto a single nu+ hardware thread. Consequently, a work-group is defined as a set of hardware threads, and all work-items in a work-group execute on a single compute unit.
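The mapping can be illustrated with a small host-side sketch in plain C. It assumes a one-dimensional index space and a work-group size equal to eight hardware threads per core (the maximum mentioned above). The type and function names are hypothetical and are not part of the nu+ toolchain.

```c
#include <stddef.h>

/* Assumption: every core is configured with eight hardware threads. */
#define THREADS_PER_CORE 8u

/* Hypothetical placement of one work-item. */
typedef struct {
    size_t work_group; /* work-group index; the whole group runs on one nu+ core */
    size_t hw_thread;  /* hardware thread inside that core (the local id) */
} placement_t;

/* Decompose a 1-D global work-item id into (work-group, hardware thread). */
static placement_t place_work_item(size_t global_id)
{
    placement_t p;
    p.work_group = global_id / THREADS_PER_CORE;
    p.hw_thread  = global_id % THREADS_PER_CORE;
    return p;
}

/* Number of work-groups needed to cover global_size work-items (rounded up). */
static size_t work_groups_needed(size_t global_size)
{
    return (global_size + THREADS_PER_CORE - 1) / THREADS_PER_CORE;
}
```

Under these assumptions, work-item 13 lands in work-group 1 on hardware thread 5, and an index space of 20 work-items needs three work-groups.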

Memory Model Matching

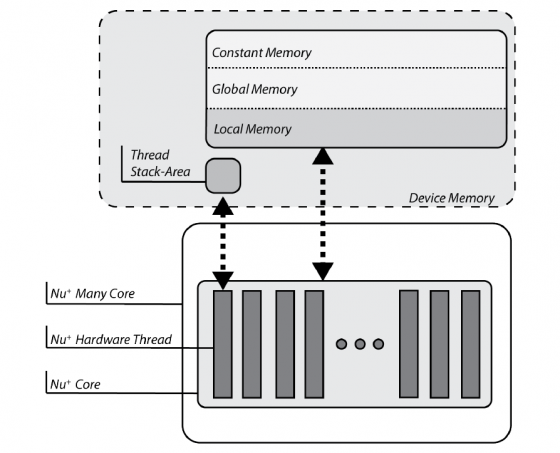

OpenCL partitions the memory into four different address spaces:

- the global and constant spaces: elements in those spaces are accessible by all work-items in all work-groups.

- the local space: visible only to work-items within a work-group.

- the private space: visible to only a single work-item.

The target platform is provided with a DDR memory, which is the device memory in OpenCL nomenclature. Consequently, variables in the global and constant spaces are physically mapped onto this memory. The compiler itself, by looking at the address-space qualifier, verifies that the OpenCL constraints are satisfied.

Each nu+ core is also equipped with a Scratchpad Memory, a portion of on-chip, non-coherent memory exclusive to each core. This memory is compliant with the OpenCL local memory features.

Finally, each hardware thread within the nu+ core has a private stack. This memory section is private to each hardware thread, that is, to the OpenCL work-item, and cannot be addressed by other threads. As a result, each stack acts as the OpenCL private memory.
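The correspondence between OpenCL address spaces and nu+ memories described in this section can be summarised as a lookup, sketched here in plain C. All enum and function names are hypothetical and chosen only for illustration; they do not come from the nu+ compiler or runtime.

```c
/* OpenCL address spaces. */
typedef enum { AS_GLOBAL, AS_CONSTANT, AS_LOCAL, AS_PRIVATE } address_space_t;

/* Physical memories of the nu+ platform, as described in the text. */
typedef enum { MEM_DDR, MEM_SCRATCHPAD, MEM_THREAD_STACK } physical_memory_t;

/* Who can see data placed in a given address space. */
typedef enum { SCOPE_ALL_WORK_GROUPS, SCOPE_WORK_GROUP, SCOPE_WORK_ITEM } visibility_t;

/* Physical memory that backs an OpenCL address space. */
static physical_memory_t backing_memory(address_space_t as)
{
    switch (as) {
    case AS_GLOBAL:
    case AS_CONSTANT: return MEM_DDR;          /* device memory */
    case AS_LOCAL:    return MEM_SCRATCHPAD;   /* one per core */
    case AS_PRIVATE:  return MEM_THREAD_STACK; /* one per hardware thread */
    }
    return MEM_DDR; /* not reached for valid enum values */
}

/* Visibility of an OpenCL address space. */
static visibility_t visibility(address_space_t as)
{
    switch (as) {
    case AS_GLOBAL:
    case AS_CONSTANT: return SCOPE_ALL_WORK_GROUPS;
    case AS_LOCAL:    return SCOPE_WORK_GROUP;
    case AS_PRIVATE:  return SCOPE_WORK_ITEM;
    }
    return SCOPE_ALL_WORK_GROUPS; /* not reached for valid enum values */
}
```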

Programming Model Matching

OpenCL supports two kinds of programming models: data-parallel and task-parallel. A data-parallel model requires that each point of the OpenCL index space executes a kernel instance. Since each point represents a work-item and work-items are mapped onto hardware threads, the data-parallel requirements are satisfied. Please note that the implemented model is a relaxed version, which does not require a strictly one-to-one mapping onto data.

A task-parallel programming model requires that kernel instances be executed independently at any point of the index space. In this case, a work-item is not constrained to execute the same kernel instance as the others. The compiler frontend defines a set of builtins that may be used for this purpose. Moreover, each nu+ core has 16 hardware lanes, which enable lock-step execution. Consequently, the OpenCL support allows the use of vector types. Vector execution is supported for the following data types:

- charn, ucharn, are respectively mapped on vec16i8 and vec16u8, where n=16. Other values of n are not supported.

- shortn, ushortn, are respectively mapped on vec16i16 and vec16u16, where n=16. Other values of n are not supported.

- intn, uintn, are respectively mapped on vec16i32 and vec16u32, where n=16. Other values of n are not supported.

- floatn, mapped on vec16f32, where n=16. Other values of n are not supported.
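The list above can be read as a lookup table from OpenCL vector type names to nu+ vector types. The following plain C sketch encodes it; the table and the function `nuplus_vector_type` are hypothetical helpers written for illustration, not part of the compiler. The entry for `ushort16` is assumed to be `vec16u16`, by analogy with the unsigned char and int rows.

```c
#include <stddef.h>
#include <string.h>

/* One row of the OpenCL-to-nu+ vector type mapping. */
typedef struct {
    const char *opencl_type;
    const char *nuplus_type;
} vector_mapping_t;

/* Only 16-element vectors are supported (n = 16). */
static const vector_mapping_t VECTOR_TYPES[] = {
    { "char16",   "vec16i8"  },
    { "uchar16",  "vec16u8"  },
    { "short16",  "vec16i16" },
    { "ushort16", "vec16u16" }, /* assumption: unsigned 16-bit counterpart */
    { "int16",    "vec16i32" },
    { "uint16",   "vec16u32" },
    { "float16",  "vec16f32" },
};

/* Return the nu+ type for an OpenCL vector type, or NULL if unsupported. */
static const char *nuplus_vector_type(const char *opencl_type)
{
    size_t count = sizeof VECTOR_TYPES / sizeof VECTOR_TYPES[0];
    for (size_t i = 0; i < count; ++i) {
        if (strcmp(VECTOR_TYPES[i].opencl_type, opencl_type) == 0)
            return VECTOR_TYPES[i].nuplus_type;
    }
    return NULL;
}
```

A query for a width other than 16, such as `float4`, returns `NULL`, which mirrors the restriction that other values of n are not supported.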

OpenCL Runtime Design

The OpenCL APIs are a set of functions meant to coordinate and manage devices; they provide support for running applications and monitoring their execution. These APIs also provide a way to retrieve device-related information.

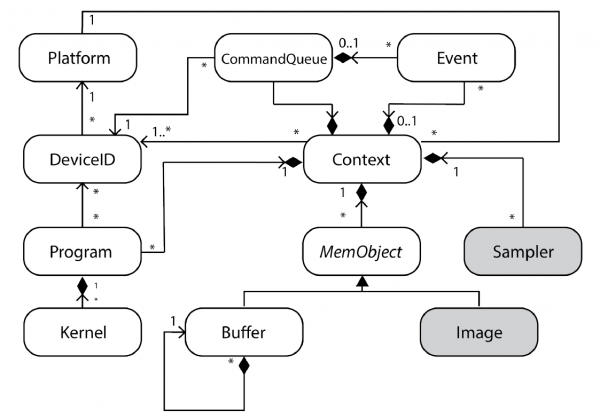

The figure above depicts the UML class diagram for the OpenCL runtime as defined in the OpenCL specification. Grey-filled boxes represent features that are not available due to the absence of hardware support.

The custom OpenCL runtime relies on two main abstractions:

- Low-level abstractions, not entirely hardware dependent, provide device-host communication support.

- High-level abstractions, according to OpenCL APIs, manage the life cycle of a kernel running on the device.

OpenCL Runtime Execution Flow

The following Figure summarizes the OpenCL runtime implementation, focusing on the execution flow through different levels of the layered architecture.

The host application communicates with the high-level APIs. All lower levels are hidden from it; thus, the programmer cannot access the device directly and must go through the APIs. These restrictions are consistent with common software engineering principles.
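The layering idea can be sketched in plain C as follows. This is not the nu+ runtime: every name here (`ll_write`, `hl_write_buffer`, `buffer_t`, and so on) is hypothetical, and a byte array stands in for the device memory. The point is only that the low-level transport functions are file-private (`static`), so a host application in another translation unit can reach the device solely through the high-level calls.

```c
#include <stddef.h>
#include <string.h>

/* ---- Low-level layer (hypothetical): host-device transport ---- */

/* Stand-in for the device memory; a real runtime would talk to hardware. */
static unsigned char device_memory[1024];

/* Copy n bytes from the host into device memory; -1 on out-of-range access. */
static int ll_write(size_t addr, const void *src, size_t n)
{
    if (addr > sizeof device_memory || n > sizeof device_memory - addr)
        return -1;
    memcpy(device_memory + addr, src, n);
    return 0;
}

/* Copy n bytes from device memory to the host; -1 on out-of-range access. */
static int ll_read(size_t addr, void *dst, size_t n)
{
    if (addr > sizeof device_memory || n > sizeof device_memory - addr)
        return -1;
    memcpy(dst, device_memory + addr, n);
    return 0;
}

/* ---- High-level layer (hypothetical): the only entry points the host sees ---- */

/* Handle to a region of device memory owned by the runtime. */
typedef struct {
    size_t addr;
    size_t size;
} buffer_t;

/* Write into a buffer, refusing transfers larger than the buffer. */
int hl_write_buffer(const buffer_t *b, const void *src, size_t n)
{
    if (n > b->size)
        return -1;
    return ll_write(b->addr, src, n);
}

/* Read from a buffer, refusing transfers larger than the buffer. */
int hl_read_buffer(const buffer_t *b, void *dst, size_t n)
{
    if (n > b->size)
        return -1;
    return ll_read(b->addr, dst, n);
}
```

Because the host only holds a `buffer_t` handle and the `hl_*` functions, the high-level layer can enforce size checks before anything reaches the transport layer.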